I previously wrote about how we shipped over 100 versions a day across more than 25 environments. This was when we had around 9 million lines of Python. A year on, a lot has changed.

This post is specifically about Kraken Customer, our core CRM platform for utilities. Other parts of Kraken use different architectures (more on that below).

This update was partly prompted by Vincent Driessen’s 15 years later post, in which he revisited his classic git-flow article. It’s a good read, though for the wrong reasons.

Microsoft had fed his original diagram through an AI image generator and published the result on their official documentation portal, without credit. The output was a garbled mess. The text “continuously merged” had become “continvoucly morged”. A sombre illustration of what careless AI use looks like in practice.[1]

We do use AI heavily in our development workflow, but we try to use it thoughtfully. This post covers how the codebase and pipeline have evolved, how we think about AI as a development tool, and how it fits into the product itself.

Scale

The core of Kraken is still a monolithic Django application in a single git repository (mono-repo), but it has grown a lot. We now have almost 15 million lines of Python code, more than 700,000 commits and over 250,000 PRs merged into the main branch.

Why not microservices?

Other parts of Kraken do use microservices. Kraken Flex, our grid flexibility and optimisation platform, is built as microservices, which suits its different scaling and deployment characteristics.

For Kraken Customer, a well-structured monolith is the right tool for the job. A monolith with a trunk-based development workflow lets us move fast, share code easily and keep the system coherent. Microservices introduce distribution complexity, network latency and the overhead of coordinating releases across many independent services. For a fast-moving team working on something as interconnected as customer and billing management, those costs are hard to justify.

One well-tested, continuously integrated version beats many loosely coordinated ones.

We have split out some services where it made sense though. Market integrations are a good example: the interfaces to energy markets have distinct deployment and compliance requirements, and separating them from the core monolith gives us cleaner boundaries and more targeted scalability. Some of these integrations have cursed constraints. For example, one only operates during office hours because the counterparty communicates by fax.

Code quality

Maintaining quality at this scale requires deliberate investment in tooling, discipline and automation.

Static typing

Our static typing coverage with mypy has come a long way. See the detailed write-up for the full story.

With over 2,000 Django ORM models and millions of lines of code, getting mypy to work well with Django required significant investment. We now catch type errors much earlier, which has reduced production incidents and made large-scale refactoring safer.
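As a toy illustration of the kind of mistake a type checker flags before the code ever runs, here is a minimal sketch. It is not taken from the Kraken codebase: `Account`, `find_account` and the in-memory store are hypothetical names and values made up for the example.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Account:
    number: str
    balance_pence: int


# Hypothetical in-memory store standing in for a database lookup.
_ACCOUNTS = {"A-1": Account(number="A-1", balance_pence=1250)}


def find_account(number: str) -> Optional[Account]:
    """Return the matching account, or None if there is no such account."""
    return _ACCOUNTS.get(number)


def balance_unsafe(number: str) -> int:
    # mypy reports an error on the next line: find_account() may return None,
    # and None has no attribute "balance_pence". Without a type checker this
    # only surfaces as an AttributeError at runtime.
    return find_account(number).balance_pence


def balance_safe(number: str) -> int:
    """Handle the missing-account case explicitly, which satisfies mypy."""
    account = find_account(number)
    if account is None:
        raise LookupError(f"No account with number {number!r}")
    return account.balance_pence
```

The unsafe version works for every account that exists, so it can pass a quick test and a quick review. The type checker is what points out the unhandled `None` case.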

Staying on the latest Django

We run on Django 6.0.3, the latest stable release.

Keeping up with major framework versions is never easy (or without incident) at our scale, but it is worth it. Staying current means we get security patches, performance improvements and new features as they land. It also avoids an ever-growing version gap to close all at once.

We are a Platinum Corporate Member of the Django Software Foundation and a Supporting Sponsor of the Python Software Foundation. Django and Python are fundamental to our business and to the clean energy transition work we do, so sponsoring their development is the right thing to do. We also sponsor a number of other open source projects that we depend on. Additionally, we host meetups and send engineers to conferences.

Keeping the test suite green

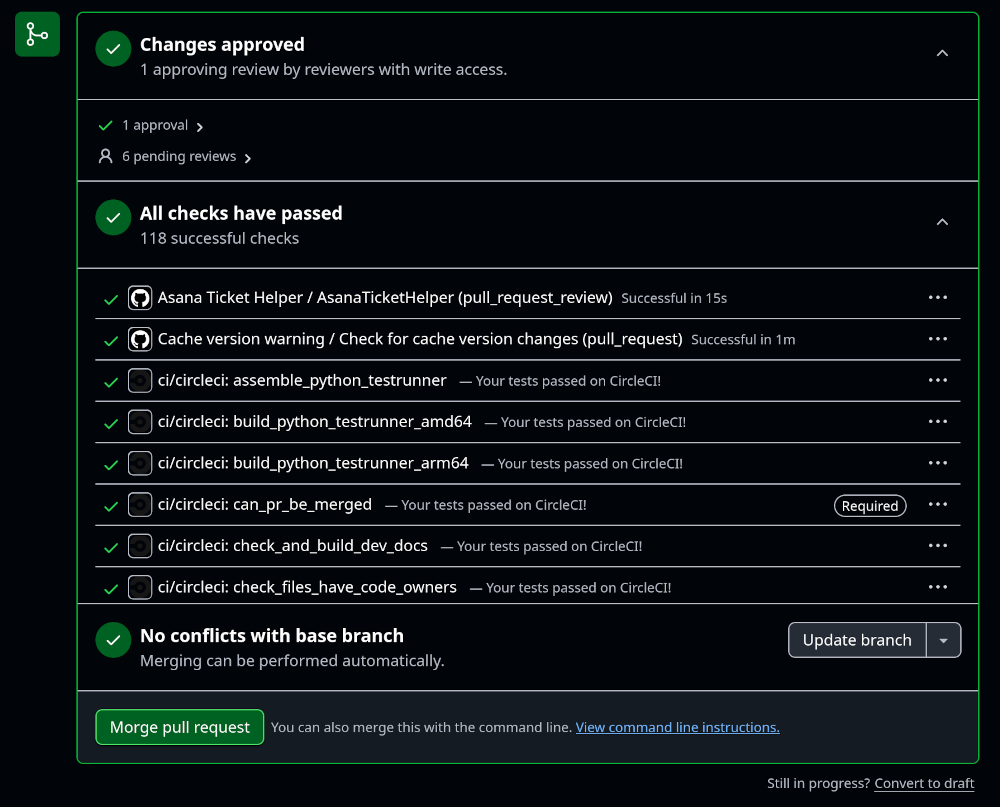

With more than 100,000 automated tests running against every merge, keeping CI green is an ongoing effort. Flakey tests, implicit merge conflicts and the occasional broken import are everyday occurrences at our scale.

We have tooling to alert developers quickly when something breaks in the main branch and bots that help keep the queue moving. More on the overall CI and deployment pipeline in the previous post.

Infrastructure

We completed a full migration to Kubernetes (K8s) a couple of years ago. There are details on our K8s setup in this post.

Running on K8s also gives us portability, on top of the operational benefits (rolling deployments, independent worker scaling, GitOps-based configuration management).

Staggered deployments

Rather than deploying to all production environments at once, we now roll out to a subset first and watch for problems before continuing. We get an early signal if something is wrong and can limit the blast radius of a bad deploy. We are constantly reevaluating this approach. The operational overhead and the latency it adds to the deployment pipeline are real costs, and we want to make sure the safety benefits justify them at our current scale.
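The shape of a staggered rollout can be sketched in a few lines. This is a simplified illustration under stated assumptions, not our actual deployment tooling: `staggered_rollout`, `deploy` and `is_healthy` are hypothetical names, and a real pipeline would wait for a soak period and look at real monitoring signals before continuing.

```python
from typing import Callable, Sequence


def staggered_rollout(
    environments: Sequence[str],
    canary_count: int,
    deploy: Callable[[str], None],
    is_healthy: Callable[[str], bool],
) -> list[str]:
    """Deploy to the first `canary_count` environments, check them, then continue.

    Returns the environments that were deployed to. If any canary is unhealthy,
    the remaining environments are left untouched.
    """
    canaries = list(environments[:canary_count])
    remainder = list(environments[canary_count:])

    deployed: list[str] = []
    for env in canaries:
        deploy(env)
        deployed.append(env)

    # Stop here if any canary looks unhealthy, limiting the blast radius.
    if not all(is_healthy(env) for env in canaries):
        return deployed

    for env in remainder:
        deploy(env)
        deployed.append(env)
    return deployed
```

The trade-off described above shows up directly in the sketch: the health check between the two loops is where the extra pipeline latency comes from.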

Sovereignty and carbon

Portability matters for two reasons: sovereignty and sustainability.

Some of our clients, and the governments of countries where they operate, have requirements about where critical infrastructure can run. They don’t want their systems hosted somewhere outside their control, such as with a US cloud provider subject to US law. K8s and containerised workloads make it much easier to move between cloud providers and regions to meet these requirements. AI tools also make cloud migrations much easier, or at least palatable.

The other reason is carbon intensity. The carbon footprint of running a large distributed system is significant[2] and varies considerably by region. France and Sweden, for example, have very low grid carbon intensity, thanks largely to nuclear and (in Sweden’s case) hydro generation. By being able to move workloads to lower-carbon regions, we can reduce our operational emissions. Another consideration is fresh water usage. Data centres in colder climates can use outside air or closed-loop systems for cooling rather than evaporative cooling, which uses a lot of potable water.
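One way to think about combining the two constraints is to filter by sovereignty first and then minimise carbon intensity. The following is a minimal sketch under that assumption. `Region`, its fields and `pick_region` are hypothetical names for illustration, and the intensity figures would need to come from a real data source.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Region:
    name: str
    jurisdiction: str
    grid_gco2_per_kwh: float  # average grid carbon intensity, supplied by the caller


def pick_region(regions: list[Region], allowed_jurisdictions: set[str]) -> Region:
    """Choose the lowest-carbon region among those that satisfy sovereignty rules."""
    candidates = [r for r in regions if r.jurisdiction in allowed_jurisdictions]
    if not candidates:
        raise ValueError("No region satisfies the sovereignty constraint")
    return min(candidates, key=lambda r: r.grid_gco2_per_kwh)
```

Sovereignty is treated as a hard constraint and carbon as the thing to optimise within it. A fuller version might also weigh latency, cost and water usage.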

Package repository caching

We proxy and cache external package repositories (PyPI, npm and others) internally. This reduces our dependency (and lowers the strain) on public infrastructure, improves build and deployment performance by serving packages from a local cache, and gives us an additional layer of security (we can audit packages before they reach developer and CI environments).

Self-hosted CI runners

We are investigating moving to self-hosted CI runners. Early indications are promising: lower costs, better performance and the ability to choose where compute runs (which matters for carbon intensity). The main open question is latency between the runners and the orchestration and control plane, which is still hosted in a high-carbon region. We need to understand whether the overall picture improves before committing.

Development workflow

The fundamentals haven’t changed: trunk-based development, continuous deployment, fix forward. What has evolved is the tooling around that workflow.

Trunk-based development

As described in the previous post, every change merges directly into the main branch.

With dozens of PRs merging every day, rebasing on the absolute latest main before merging is often not practical. We have bots to remind developers to rebase if their branch is too far behind, and mechanisms to force a rebase when breaking changes land. After a PR is approved, we also check that the diff has not changed (while still allowing for rebases), to catch cases where changes were made post-approval.
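A check that tolerates rebases has to compare what a PR changes, not the exact diff text. The sketch below shows one possible approach and is not a description of our actual implementation: `diff_fingerprint` and `changed_since_approval` are hypothetical names. It hashes only the file headers and the added and removed lines of a unified diff, which is a simplification. The real `git patch-id` command computes a comparable identifier that ignores line numbers and whitespace.

```python
import hashlib


def diff_fingerprint(unified_diff: str) -> str:
    """Hash only the parts of a unified diff that describe the change itself.

    Blob hashes ("index" lines), hunk positions ("@@" lines) and context lines
    are ignored, because a rebase can alter them without changing what the PR does.
    """
    digest = hashlib.sha256()
    for line in unified_diff.splitlines():
        # Keeps file headers ("---"/"+++") plus added and removed lines.
        if line.startswith(("+", "-")):
            digest.update(line.encode("utf-8"))
            digest.update(b"\n")
    return digest.hexdigest()


def changed_since_approval(approved_diff: str, current_diff: str) -> bool:
    """True if the PR's changes differ from what the reviewer approved."""
    return diff_fingerprint(approved_diff) != diff_fingerprint(current_diff)
```

Ignoring context lines makes the check forgiving of upstream changes near the edited code, at the cost of not noticing when the same edit now lands in different surroundings.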

We fix forward rather than rolling back wherever possible. If an implicit merge conflict sneaks through, the goal is to detect it fast and push a fix, not to revert.

Stale PRs

We recently started automatically closing stale PRs. With over 250,000 PRs merged and a fast-moving codebase, an abandoned branch rarely improves with age. Closing stale PRs keeps the queue manageable and nudges developers to either finish work or consciously defer it, rather than letting it quietly rot.
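The selection step behind this can be very small. Here is a minimal sketch that assumes staleness is judged purely by time since the last activity. `find_stale_prs` is a hypothetical name and `max_idle` is a parameter because the threshold is a policy choice.

```python
from datetime import datetime, timedelta


def find_stale_prs(
    last_updated: dict[int, datetime],
    now: datetime,
    max_idle: timedelta,
) -> list[int]:
    """Return the PR numbers with no activity for longer than `max_idle`, oldest first."""
    stale = [pr for pr, updated in last_updated.items() if now - updated > max_idle]
    return sorted(stale, key=lambda pr: last_updated[pr])
```

A bot built on this would typically warn on the PR before closing it, so that deferring work stays a conscious decision.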

Pre-commit hooks

We make heavy use of pre-commit hooks. Catching formatting issues, lint errors and other problems before a commit is pushed means fewer CI failures and less review noise. With a codebase of this size and this many contributors, shifting checks left is worth the investment.

For example, we run a pre-push hook that checks whether the branch needs a rebase before pushing. If a new high water mark has been merged then the push is blocked and the developer is prompted to rebase first. This avoids triggering a full CI run on a branch that will be rejected at merge time anyway, which at our scale adds up to a meaningful saving in compute, cost and carbon.
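A pre-push check along these lines can be built on `git merge-base --is-ancestor`, which exits with status 0 when the first commit is an ancestor of the second. The sketch below is an illustration rather than our actual hook: `push_allowed` and `git_is_ancestor` are hypothetical names, and it assumes the high water mark is available to the hook as a commit SHA.

```python
import subprocess
from typing import Callable


def git_is_ancestor(ancestor: str, descendant: str) -> bool:
    """True if `ancestor` is reachable from `descendant`, according to git."""
    result = subprocess.run(
        ["git", "merge-base", "--is-ancestor", ancestor, descendant],
        check=False,
    )
    # Exit status 0 means "is an ancestor"; 1 means "is not". Any other status
    # is a git error, which this sketch also treats as "not an ancestor".
    return result.returncode == 0


def push_allowed(
    high_water_mark: str,
    branch_head: str = "HEAD",
    is_ancestor: Callable[[str, str], bool] = git_is_ancestor,
) -> bool:
    """Allow the push only if the branch already contains the high water mark commit."""
    return is_ancestor(high_water_mark, branch_head)
```

A hook script would call `push_allowed(...)` and exit non-zero with a "please rebase" message when it returns `False`. Passing `is_ancestor` as a parameter keeps the decision logic testable without a git repository.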

AI in development

We use tools like Claude and Cursor across the development workflow: writing code, generating tests, debugging and refactoring. This section covers how we think about code quality and responsibility, what we can say about productivity, and how we approach the carbon footprint of running inference.

Code quality and responsibility

AI-generated code carries the same quality bar as any other code. Every change requires a human code review, regardless of how it was written. The developer who pushes the code is responsible for it and another human must check it.

The most visible failures of AI-assisted development have come from teams treating LLM output as inherently correct, or skipping review because the code “looks right”. Slop is a real risk: plausible-looking code that passes a quick read but contains subtle bugs, security issues or misunderstood requirements. The antidote is the same as it always was: careful review, good tests and developer ownership.

Productivity

Engineers report that LLM tooling has sped up development, particularly for boilerplate, test generation and refactoring. We don’t have hard evidence that it improves overall throughput; the productivity gains are self-reported and there may also be selection bias. The codebase has grown by more than 50% over the past year, but we would not attribute that to AI alone.

Where we run inference

LLM API calls are often less latency-sensitive than application workloads, which gives us more flexibility about where inference runs. Production workloads need to be close to clients for sovereignty and latency reasons. Inference, by contrast, can be routed to providers in lower-carbon regions, such as parts of Europe.

The energy cost per LLM query is relatively small (around 0.3 Wh for a typical text prompt, and falling fast as models become more efficient[3]). Globally, data centres account for a modest share of total electricity demand growth. The real issue is geographic concentration: data centres account for 17% of Ireland’s electricity demand and over 10% in several US states. Choosing where inference runs therefore matters, both for carbon intensity and for local grid pressure. We also avoid reaching for LLMs where a simpler tool would do.

AI in the product

For the Kraken product, traditional ML and generative AI (LLMs) play quite different roles.

For most of our product AI, we use traditional ML rather than LLMs. ML models are typically far more energy-efficient than LLMs for inference, and fit the optimisation problems we solve better. For example, our ResiFlex smart-charging and flexibility optimisation (including deciding when to charge EVs, dispatch batteries and flex heat pumps) relies on ML-based optimisation rather than generative AI. That means we can run these workloads for hundreds of thousands of devices without the energy cost of LLM inference.

LLMs do have a role in some features. For example, Kraken’s Magic Ink feature uses AI to help with customer communication.

We’re in the business of accelerating the clean energy transition, so how much energy we consume is more than just an operating cost.

Conclusion

A lot has changed since the last time I wrote about this, a little over a year ago. The codebase has grown by more than 50%, our K8s migration has matured and given us flexibility over where we run, and AI tooling has become part of the everyday development workflow. However, measuring its impact remains an open question.

The core approach hasn’t changed: trunk-based development, continuous deployment, fixing forward and keeping the system coherent. The tooling around that has got a lot better, including the use of “AI”.

This post was also written and reviewed with a little AI assistance. 😁

[1] The term Microslop has been coined for this kind of output. Microsoft’s response was to block the word on its Copilot Discord server, which had the predictable Streisand effect of spreading it more widely. The same dynamic played out when Palantir’s legal pressure drew far more attention to Republik’s investigation into its pursuit of Swiss government contracts than the original article would otherwise have received.

[2] According to the IEA’s Energy and AI report (published April 2025), global data centre electricity consumption was around 415 TWh in 2024 and is projected to more than double to ~945 TWh by 2030, driven primarily by AI. The 2035 base case reaches ~1,200 TWh.

[3] Google’s published analysis of Gemini estimates around 0.24 Wh per median text query, a 33-fold improvement over 12 months. Independent estimates for ChatGPT converge on a similar ~0.3 Wh figure. Video generation is an order of magnitude more expensive and is the category worth scrutinising more carefully. For a good breakdown of per-query costs see Hannah Ritchie’s May 2025 and August 2025 write-ups; for the aggregate and local grid picture see her November 2024 piece.

